Retrieval-Augmented Generation (RAG) Tutorial: Architecture, Implementation, and Production Guide

From basic RAG to production: chunking, vector search, reranking, and evaluation in a single guide.

This Retrieval-Augmented Generation (RAG) tutorial is a step-by-step, production-focused guide to building real-world RAG systems.

If you are looking for:

- How to build a RAG system

- RAG architecture explained

- A RAG tutorial with examples

- How to implement RAG with vector databases

- RAG with reranking

- RAG with web search

- RAG best practices in production

You are in the right place.

This guide consolidates practical knowledge on RAG implementation, architectural patterns, and optimization techniques used in production AI systems.

RAG Cluster Map (Read in This Order)

If you want the fastest path through the RAG cluster, use this map:

- You are here: RAG overview + end-to-end pipeline (this page)

- Chunking (the foundation of retrieval quality): Chunking Strategies in RAG

- Text embeddings (APIs and Python): Text Embeddings for RAG and Search (Ollama and OpenAI-compatible embedding endpoints, retrieval format, onward links)

- Vector stores (storage choices + indexing): Vector Stores for RAG Comparison

- Retrieval depth (when “search” is not enough): Search vs DeepSearch vs Deep Research

- Reranking (often the biggest quality gain): Reranking with Embedding Models

- Embeddings + reranker models (practical implementations):

- Advanced architectures: Advanced RAG Variants: LongRAG, Self-RAG, GraphRAG

- Graph + vector retrieval (GraphRAG in a graph database): Neo4j Graph Database for GraphRAG, Install, Cypher, Vectors, Ops (property graphs, vector indexes, and neo4j-graphrag in one place)

What Is Retrieval-Augmented Generation (RAG)?

Retrieval-Augmented Generation (RAG) is a system design pattern that combines:

- Information retrieval

- Context augmentation

- Large language model (LLM) generation

In simple terms, a RAG pipeline retrieves relevant documents and injects them into the prompt before the model generates an answer.

Unlike fine-tuning, RAG:

- Works with frequently updated data

- Supports private knowledge bases

- Reduces hallucinations by giving the model evidence to answer from

- Avoids retraining large models

- Improves the grounding of answers

Modern RAG systems include more than just vector search. A complete RAG implementation may include:

- Query rewriting

- Hybrid search (BM25 + vector search)

- Cross-encoder reranking

- Multi-stage retrieval

- Web search integration

- Evaluation and monitoring

Minimal Production RAG Blueprint (Reference Implementation)

Use this as a mental model (and a starter skeleton) for production RAG.

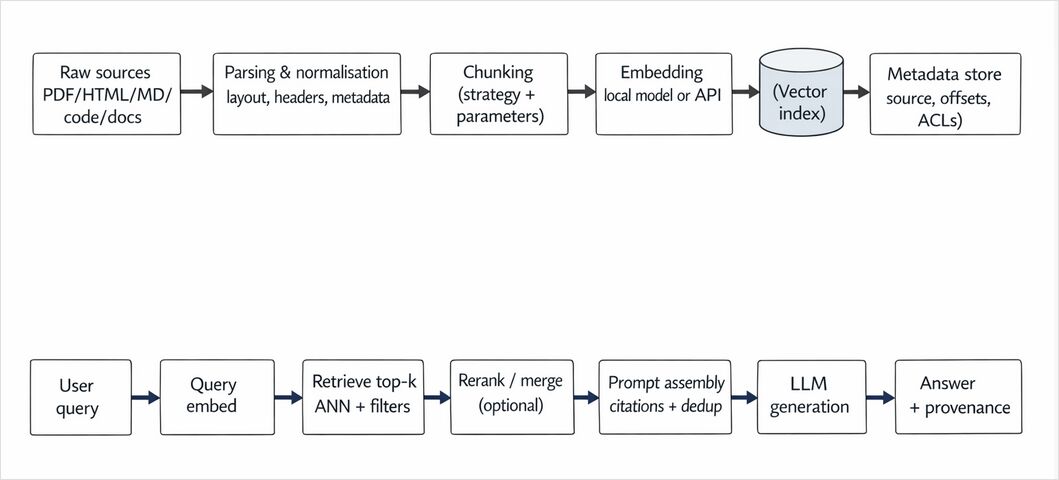

Ingestion pipeline (offline or continuous)

- Collect sources (documents, tickets, web pages, PDFs, code)

- Normalize (extract text, strip boilerplate, deduplicate)

- Chunk (choose strategy + overlap + metadata)

- Embed (versioned embeddings)

- Upsert into the index (vector store + metadata fields)

- Define a reindexing strategy for when embeddings or chunking change

Query pipeline (online)

- Parse / rewrite the query (optional)

- Retrieve candidates (vector or hybrid + metadata filtering)

- Rerank the top-K with a cross-encoder / reranker model

- Assemble the context (deduplicate, order by relevance, add citations)

- Generate with a grounded prompt (rules + refusal behavior)

- Log (retrieved set, reranked set, final context, latency, cost)

- Evaluate (online/offline harness)

If you can improve only two things in a working RAG system, add reranking and an evaluation harness.
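
The "assemble the context" step in the query pipeline can be sketched as follows. This is a minimal illustration under stated assumptions, not a reference implementation: the `Chunk` dataclass, the `assemble_context` function, the character budget, and the `[n]` citation format are all hypothetical names and choices made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    """Hypothetical shape of a reranked chunk; field names are assumptions."""
    doc_id: str
    text: str
    score: float  # higher means more relevant


def assemble_context(chunks: list[Chunk], max_chars: int = 2000) -> tuple[str, list[str]]:
    """Deduplicate, order by relevance, and number chunks for citation.

    Returns the context string and the cited doc_ids, where citation [n]
    in the context refers to sources[n - 1].
    """
    seen: set[str] = set()
    parts: list[str] = []
    sources: list[str] = []
    used = 0
    for chunk in sorted(chunks, key=lambda c: c.score, reverse=True):
        # Case- and whitespace-insensitive key so near-identical copies collapse.
        key = " ".join(chunk.text.lower().split())
        if key in seen:
            continue
        seen.add(key)
        entry = f"[{len(sources) + 1}] {chunk.text.strip()}"
        if used + len(entry) > max_chars:
            break  # stop at the budget instead of truncating mid-chunk
        parts.append(entry)
        sources.append(chunk.doc_id)
        used += len(entry)
    return "\n\n".join(parts), sources
```

A character budget is used here only to keep the sketch dependency-free; a production system would more likely budget in tokens using the generation model's tokenizer.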

Step-by-Step RAG Tutorial: How to Build a RAG System

This section walks through a practical RAG build flow for developers.

Step 1: Prepare and Chunk Your Data

Retrieval quality depends heavily on chunking strategy and index design: good RAG starts with good chunking.

Chunking influences:

- Retrieval recall

- Latency

- Context noise

- Token cost

- Hallucination risk

Common RAG chunking strategies include:

- Fixed-size chunking

- Sliding-window chunking

- Semantic chunking

- Recursive chunking

- Hierarchical chunking

- Metadata-aware chunking

Poor chunking is one of the most common causes of underperforming RAG systems.
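
As an illustration of the fixed-size and sliding-window strategies listed above, here is a minimal sketch that splits text into word-based chunks with overlap. The function name `chunk_words` and the default sizes are assumptions chosen for the example, not recommended values; real pipelines often count tokens rather than words.

```python
def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into chunks of up to `size` words.

    Each chunk shares `overlap` words with the previous one, so a sentence
    cut at a boundary still appears whole in at least one chunk.
    """
    if size <= 0:
        raise ValueError("size must be positive")
    if not 0 <= overlap < size:
        raise ValueError("overlap must satisfy 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks: list[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this window already reached the end of the text
    return chunks
```

Setting `overlap=0` gives plain fixed-size chunking; a larger overlap trades index size and token cost for a lower chance of splitting relevant content across chunks.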

For an in-depth, engineering-focused look at chunking trade-offs, evaluation dimensions, decision matrices, and runnable Python implementations, see:

Chunking Strategies in RAG: Alternatives, Trade-offs, and Examples

That guide covers practical patterns for:

- Question-answering systems

- Summarization pipelines

- Code search

- Streaming ingestion

- Multimodal documents with cross-modal embeddings

If you are serious about RAG performance, read it before tuning embeddings or reranking.

For multimodal RAG systems that connect text, images, and other modalities, explore Cross-Modal Embeddings: Bridging AI Modalities

Step 2: Choose a Vector Database for RAG

A vector database stores embeddings for fast similarity search.

Compare vector databases here:

Vector Stores for RAG Comparison

When selecting a vector database for a RAG tutorial or a production system, consider:

- Index type (HNSW, IVF, etc.)

- Filtering support

- Deployment model (cloud vs. self-hosted)

- Query latency

- Horizontal scalability

- Multitenancy and access control requirements

Step 3: Implement Retrieval (Vector or Hybrid Search)

Basic RAG retrieval uses embedding similarity.

Advanced RAG retrieval uses:

- Hybrid search (vector + keyword)

- Metadata filtering

- Multi-index retrieval

- Query rewriting

For conceptual grounding:

Search vs DeepSearch vs Deep Research

Understanding retrieval depth is essential for high-quality RAG pipelines.
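
To make the difference between basic and hybrid retrieval concrete, the sketch below shows brute-force cosine similarity search and one common way to merge a vector ranking with a keyword ranking: reciprocal rank fusion (RRF). RRF is an assumption of this example; this guide does not prescribe a specific fusion method. The names `vector_search` and `rrf_fuse` are hypothetical, and the keyword ranking is assumed to come from a separate BM25 index.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def vector_search(query_vec: list[float], index: dict[str, list[float]], k: int = 5) -> list[str]:
    """Brute-force top-k by cosine similarity.

    Real vector stores replace this full scan with an ANN index (HNSW, IVF).
    """
    ranked = sorted(index, key=lambda doc_id: cosine(query_vec, index[doc_id]), reverse=True)
    return ranked[:k]


def rrf_fuse(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Reciprocal rank fusion: score(d) = sum over rankings of 1 / (k + rank_of_d)."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda d: scores[d], reverse=True)
```

Because RRF works on ranks rather than raw scores, it avoids having to normalize BM25 scores and cosine similarities onto a common scale.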

Step 4: Add Reranking to Your RAG Pipeline

Reranking is often the single biggest quality improvement in a RAG implementation.

Reranking improves:

- Precision

- Context relevance

- Faithfulness

- Signal-to-noise ratio

Learn reranking techniques:

- Reranking with Embedding Models

- Qwen3 Embedding + Qwen3 Reranker on Ollama

- Reranking with Ollama + Qwen3 Embedding (Go)

- Reranking with Ollama + Qwen3 Reranker in Go

In production RAG systems, reranking often matters more than switching to a larger model.
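
The shape of a reranking stage can be sketched independently of any specific model. In the example below, `rerank` and `toy_overlap_score` are hypothetical names; the term-overlap scorer is only a placeholder so that the sketch runs without dependencies. In a real pipeline, the scoring function would call a cross-encoder or reranker model that reads the query and the document together.

```python
from typing import Callable


def rerank(
    query: str,
    candidates: list[str],
    score_fn: Callable[[str, str], float],
    top_n: int = 3,
) -> list[tuple[str, float]]:
    """Score every (query, candidate) pair and keep the top_n best."""
    scored = [(doc, score_fn(query, doc)) for doc in candidates]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]


def toy_overlap_score(query: str, doc: str) -> float:
    """Placeholder scorer: fraction of query terms present in the document.

    This is NOT a real reranker; swap in a model call here.
    """
    q_terms = set(query.lower().split())
    d_terms = set(doc.lower().split())
    return len(q_terms & d_terms) / len(q_terms) if q_terms else 0.0
```

The usual pattern is to retrieve a generous candidate set (for example, tens of chunks) cheaply, then spend the more expensive pairwise scoring only on that set and pass a handful of survivors to the prompt.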

Step 5: Integrate Web Search (Optional but Powerful)

Adding web search to a RAG pipeline enables dynamic knowledge retrieval.

Web search is useful for:

- Real-time data

- News-aware AI assistants

- Competitive intelligence

- Open-domain question answering

See practical implementations:

Step 6: Build a RAG Evaluation Framework

A serious RAG tutorial must include evaluation. Without it, optimizing a RAG system becomes guesswork.

What to measure

| Layer | What to measure | Why it matters |
|---|---|---|
| Ingestion | chunk coverage, duplicate rate, embedding version | prevents silent drift |
| Retrieval | recall@k, precision@k, MRR/NDCG | tells you whether you are fetching the right evidence |
| Reranking | delta in precision@k vs. baseline | validates the reranker's ROI |
| Generation | faithfulness / grounding, citation accuracy, refusal quality | reduces hallucination |
| System | p50/p95 latency, cost per query, cache hit rate | keeps production usable |
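
The retrieval-layer metrics in the table have simple standard definitions, sketched below. The function names are assumptions for this example, and the code assumes each retrieved list contains unique document IDs.

```python
def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k retrieved IDs that are relevant."""
    if k <= 0:
        return 0.0
    hits = sum(1 for doc_id in retrieved[:k] if doc_id in relevant)
    return hits / k


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of all relevant IDs that appear in the top-k."""
    if not relevant:
        return 0.0
    hits = sum(1 for doc_id in retrieved[:k] if doc_id in relevant)
    return hits / len(relevant)


def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def mean_reciprocal_rank(runs: list[tuple[list[str], set[str]]]) -> float:
    """Average reciprocal rank over (retrieved, relevant) pairs, one per query."""
    if not runs:
        return 0.0
    return sum(reciprocal_rank(r, rel) for r, rel in runs) / len(runs)
```

Computing the same metrics before and after the reranker, on the same query set, gives the "delta vs. baseline" the reranking row refers to.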

Minimal evaluation harness (practical checklist)

- Build a test set of queries (real user queries if possible)

- For each query, store:

  - the expected answer or expected sources

  - allowed sources (gold documents), when available

- Run an offline batch:

  - retrieve candidates

  - rerank

  - generate

  - score (retrieval + generation)

- Track metrics over time and fail the build on regressions (even small ones)

Start simple: 50 to 200 queries are enough to catch major regressions.
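
The "fail the build on regressions" item can be as small as a comparison between a stored baseline and the current run. The sketch below is one possible shape; `find_regressions`, the metric names, and the default tolerance are assumptions for illustration, and it assumes higher is better for every metric.

```python
def find_regressions(
    baseline: dict[str, float],
    current: dict[str, float],
    tolerance: float = 0.01,
) -> list[str]:
    """Return one message per metric that dropped by more than `tolerance`.

    Assumes higher is better for every metric. A metric missing from the
    current run is reported as a failure rather than silently skipped.
    """
    failures: list[str] = []
    for name, base in baseline.items():
        value = current.get(name)
        if value is None:
            failures.append(f"{name}: missing from current run")
        elif base - value > tolerance:
            failures.append(f"{name}: {base:.3f} -> {value:.3f}")
    return failures
```

In a CI job, a non-empty result would typically be printed and followed by a non-zero exit code. The tolerance absorbs run-to-run noise from a small query set; set it to `0.0` for a strict gate.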

Advanced RAG Architectures

Once you understand basic RAG, explore advanced patterns:

Advanced RAG Variants: LongRAG, Self-RAG, GraphRAG

Advanced Retrieval-Augmented Generation architectures enable:

- Multi-hop reasoning

- Graph-based retrieval

- Self-correction loops

- Structured knowledge integration

For GraphRAG and knowledge-graph retrieval, where you combine graph traversal with vector similarity in a single system, see Neo4j Graph Database for GraphRAG, Install, Cypher, Vectors, Ops (installation, Cypher, vector indexes, hybrid retrieval, and the neo4j-graphrag Python package).

These architectures are essential for enterprise-grade AI systems.

When RAG Fails (and How to Fix It)

Most RAG failures are diagnosable if you inspect the pipeline layer by layer.

- Returns irrelevant context → improve chunking, add metadata filters, implement hybrid search, tune K.

- Retrieves the right documents but answers incorrectly → add reranking, reduce context noise, tighten the grounding rules in the prompt.

- Hallucinates despite good documents → require citations, add refusal behavior, add faithfulness scoring, lower the “creative” sampling temperature.

- Is slow or expensive → cache retrieval + embeddings, reduce the reranking K, cap the context size, batch embeddings, tune ANN index parameters.

- Leaks data across tenants → enforce ACL filtering at retrieval time (not just in the prompt), use separate indexes or per-tenant partitions.
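
The last fix, enforcing access control at retrieval time, is sketched below. The `IndexedChunk` fields and the `authorized_candidates` function are hypothetical names for this example. The key property is that unauthorized chunks are removed before the top-k cut, so they can never reach the prompt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IndexedChunk:
    """Hypothetical index record; the metadata fields are assumptions."""
    chunk_id: str
    tenant_id: str
    allowed_groups: frozenset[str]
    score: float  # similarity to the current query, higher is better


def authorized_candidates(
    candidates: list[IndexedChunk],
    tenant_id: str,
    user_groups: set[str],
    k: int = 5,
) -> list[IndexedChunk]:
    """Keep only chunks the caller may see, then take the top-k by score."""
    visible = [
        c for c in candidates
        if c.tenant_id == tenant_id and c.allowed_groups & user_groups
    ]
    visible.sort(key=lambda c: c.score, reverse=True)
    return visible[:k]
```

With a real vector store, the same condition would usually be pushed down into the query as a metadata filter or handled by per-tenant partitions, so the store never returns unauthorized chunks in the first place.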

Common Mistakes in RAG Implementation

Common mistakes in beginner RAG tutorials include:

- Using excessively large document chunks

- Skipping reranking

- Overloading the context window

- Not filtering on metadata

- Having no evaluation harness

Fixing these dramatically improves RAG system performance.

RAG vs Fine-Tuning

Many tutorials conflate RAG and fine-tuning. Use this decision guide:

| Prefer… | When… |
|---|---|
| RAG | knowledge changes frequently; you need citations/auditability; you have private documents; you want fast updates without retraining |
| Fine-tuning | you need consistent tone/behavior; you want the model to follow a domain style guide; your knowledge is relatively static |
| Both | you need domain behavior and fresh/private knowledge (common in production) |

Use RAG for:

- External knowledge retrieval

- Frequently updated data

- Lower operational risk

Use fine-tuning for:

- Behavioral control

- Tone/style consistency

- Domain adaptation when the data is static

Most advanced AI systems combine Retrieval-Augmented Generation with selective fine-tuning.

RAG Best Practices in Production

If you are moving beyond a RAG tutorial into production:

Retrieval + quality

- Use hybrid retrieval

- Add reranking

- Use metadata filtering and deduplication

- Track retrieval metrics (recall@k / precision@k) continuously

Cost + latency (do not skip this)

- Cache:

  - Embedding cache (identical text → identical embedding)

  - Retrieval cache (popular queries)

  - Response cache (for deterministic workflows)

- Tune ANN index parameters (HNSW/IVF) and batch operations

- Control token usage: smaller context, fewer candidates, structured prompts
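
The embedding cache item above ("identical text → identical embedding") can be sketched as a small wrapper around whatever embedding call you use. `EmbeddingCache`, `embed_fn`, and `model_version` are hypothetical names, and the in-memory dict is an assumption; a shared store would be a natural choice in production. Including the model version in the key means a model upgrade cannot serve stale vectors.

```python
import hashlib
from typing import Callable


class EmbeddingCache:
    """In-memory cache keyed by (model version, exact text)."""

    def __init__(self, embed_fn: Callable[[str], list[float]], model_version: str):
        self._embed_fn = embed_fn          # the real embedding call, injected
        self._model_version = model_version
        self._store: dict[str, list[float]] = {}
        self.hits = 0
        self.misses = 0

    def _key(self, text: str) -> str:
        # NUL separator keeps "v1" + "2abc" distinct from "v12" + "abc".
        payload = f"{self._model_version}\x00{text}".encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def embed(self, text: str) -> list[float]:
        key = self._key(text)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        vector = self._embed_fn(text)
        self._store[key] = vector
        return vector
```

The `hits` and `misses` counters feed directly into the cache hit rate listed under system metrics in the evaluation table.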

Security + privacy

- Enforce access control at retrieval time (ACL filters / per-tenant partitions)

- Redact or avoid indexing PII (personally identifiable information) whenever possible

- Log safely (avoid storing raw sensitive prompts unless necessary)

Operational discipline

- Version your embeddings and chunking strategy

- Automate ingestion pipelines

- Monitor hallucination/faithfulness metrics

- Track cost per query

Retrieval-Augmented Generation is not just a tutorial concept; it is a production architecture discipline.

Final Thoughts

This RAG tutorial covers both beginner implementation and advanced system design.

Retrieval-Augmented Generation is the backbone of many modern AI applications.

Mastering RAG architecture, reranking, vector databases, hybrid search, and evaluation will determine whether your AI system stays a demo or becomes production-ready.

This topic will keep expanding as RAG systems evolve.