AI Systems: Self-Hosted Assistants, RAG, and Local Infrastructure

Most local AI setups start with a model and a runtime.

What actually happens when you run Ultrawork.

Oh My Opencode promises a “virtual AI dev team” — Sisyphus orchestrating specialists, tasks running in parallel, and the magic ultrawork keyword activating all of it.

OpenCode LLM test — coding and accuracy stats

I tested how OpenCode works with several LLMs hosted locally on Ollama and, for comparison, added some free models from OpenCode Zen.

Meet Sisyphus and its specialist agent crew.

The biggest capability jump in OpenCode comes from specialised agents: deliberate separation of orchestration, planning, execution, and research.

OpenHands CLI QuickStart in minutes

OpenHands is an open-source, model-agnostic platform for AI-driven software development agents. It lets an agent behave more like a coding partner than a simple autocomplete tool.

Self-host OpenAI-compatible APIs with LocalAI in minutes.

LocalAI is a self-hosted, local-first inference server designed to behave like a drop-in OpenAI API for running AI workloads on your own hardware (laptop, workstation, or on-prem server).
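
To make the "drop-in OpenAI API" idea concrete, here is a minimal sketch of a client that speaks the OpenAI-style chat completions format using only the Python standard library. The base URL, port, and model name are assumptions for illustration and are not taken from the article; replace them with whatever your own server exposes.

```python
import json
import urllib.request

# Assumed values for illustration only; adjust to your own deployment.
BASE_URL = "http://localhost:8080/v1"  # hypothetical local endpoint
MODEL_NAME = "my-local-model"          # hypothetical model name


def build_chat_request(prompt, base_url=BASE_URL, model=MODEL_NAME):
    """Build an OpenAI-style chat completion request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat_request(request, timeout=60):
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With a server running, `send_chat_request(build_chat_request("Say hello"))` would return the model's reply. Because only the base URL changes, the same client can in principle be pointed at any endpoint that follows the OpenAI chat completions format.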

Install Oh My Opencode and ship faster.

Oh My Opencode turns OpenCode into a multi-agent coding harness: an orchestrator delegates work to specialist agents that run in parallel.

I keep coming back to llama.cpp for local inference: it gives you control that Ollama and others abstract away, and it just works. It is easy to run GGUF models interactively with llama-cli or to expose an OpenAI-compatible HTTP API with llama-server.

Artificial Intelligence is reshaping how software is written, reviewed, deployed, and maintained. From AI coding assistants to GitOps automation and DevOps workflows, developers now rely on AI-powered tools across the entire software lifecycle.

Airtable - Free plan limits, API, webhooks, Go & Python.

Airtable is best thought of as a low-code application platform built around a collaborative “database-like” spreadsheet UI. It is excellent for rapidly creating operational tooling (internal trackers, lightweight CRMs, content pipelines, AI evaluation queues) where non-developers need a friendly interface but developers also need an API surface for automation and integration.

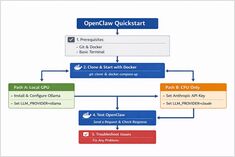

How to Install, Configure, and Use OpenCode

OpenCode is an open-source AI coding agent you can run in the terminal (TUI + CLI) with optional desktop and IDE surfaces. This is the OpenCode Quickstart: install, verify, connect a model/provider, and run real workflows (CLI + API).

Monitor LLM with Prometheus and Grafana

LLM inference looks like “just another API” — until latency spikes, queues back up, and your GPUs sit at 95% memory with no obvious explanation.

Install OpenClaw locally with Ollama

OpenClaw is a self-hosted AI assistant designed to run with local LLM runtimes like Ollama or with cloud-based models such as Claude Sonnet.

OpenClaw AI Assistant Guide

Most local AI setups start the same way: a model, a runtime, and a chat interface.

End-to-end observability strategy for LLM inference and LLM applications

LLM systems fail in ways that traditional API monitoring cannot surface — queues fill silently, GPU memory saturates long before CPU looks busy, and latency blows up at the batching layer rather than the application layer. This guide covers an end-to-end observability strategy for LLM inference and LLM applications: what to measure, how to instrument it with Prometheus, OpenTelemetry, and Grafana, and how to deploy the telemetry pipeline at scale.
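
As a rough illustration of the "what to measure" part, the sketch below renders a gauge in the Prometheus text exposition format using only the standard library. The metric name and label are hypothetical and chosen only for this example; a real deployment would more likely use an official Prometheus client library and the metrics your inference server already exports.

```python
def render_gauge(name, help_text, samples):
    """Render one gauge in the Prometheus text exposition format.

    `samples` maps a tuple of (label_name, label_value) pairs
    to a numeric reading.
    """
    lines = [f"# HELP {name} {help_text}", f"# TYPE {name} gauge"]
    for labels, value in samples.items():
        label_str = ",".join(f'{key}="{val}"' for key, val in labels)
        if label_str:
            lines.append(f"{name}{{{label_str}}} {value}")
        else:
            lines.append(f"{name} {value}")
    return "\n".join(lines) + "\n"


# Hypothetical metric: requests waiting in the inference queue, per model.
queue_depth = render_gauge(
    "llm_queue_depth",
    "Requests waiting for a free inference slot",
    {(("model", "example-model"),): 3},
)
```

Serving text like this from a `/metrics` endpoint is what lets Prometheus scrape signals such as queue depth, which ordinary request-level API monitoring tends to miss.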

Comparison of Chunking Strategies in RAG

Chunking is the most underestimated hyperparameter in Retrieval-Augmented Generation (RAG): it silently determines what your LLM “sees”, how expensive ingestion becomes, and how much of the LLM’s context window you burn per answer.
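
To show why chunk size behaves like a hyperparameter, here is a minimal sketch of one simple strategy: fixed-size character windows with overlap. The function name and default values are assumptions for illustration; this is not presented as the strategy the article recommends.

```python
def chunk_text(text, chunk_size=200, overlap=40):
    """Split text into fixed-size character windows that overlap."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")

    step = chunk_size - overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reached the end of the text
    return chunks


# Tiny example so the windows are easy to inspect by hand.
example_chunks = chunk_text("abcdefghij", chunk_size=4, overlap=1)
# -> ["abcd", "defg", "ghij"]
```

Smaller chunks or larger overlaps produce more pieces to embed and store at ingestion time, while larger chunks consume more of the context window for each retrieved passage.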