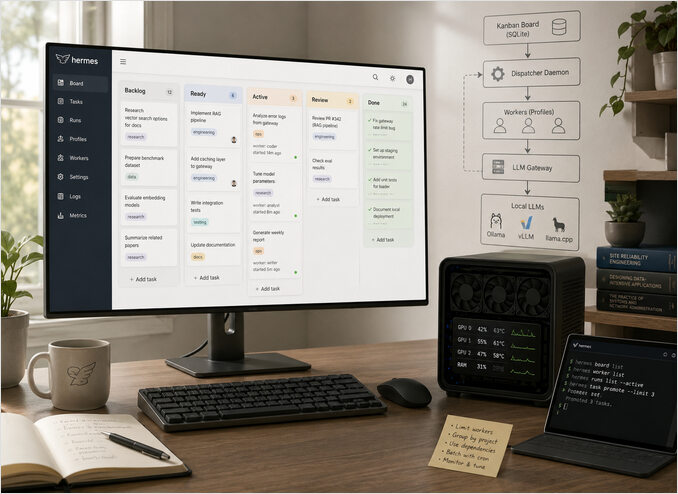

Kanban in Hermes Agent for Self-Hosted LLM Workflows

Control Hermes Kanban load on your self-hosted LLM.

Hermes Agent ships with a Kanban-style task board that can easily saturate your self-hosted LLM gateway if you let every task run at once.

Hermes Kanban is a durable, multi-profile board backed by ~/.hermes/kanban.db.

Every lane represents a phase of work, and every card is a task that can be claimed by a specific Hermes profile.

Out of the box, the dispatcher daemon will happily spin up agents for any number of ready tasks. That is great for cloud APIs but dangerous when you have a small cluster of self-hosted GPUs.

If you are new to this stack, start with the broader Hermes setup and operations guide and the AI Systems pillar for surrounding architecture.

This post shows how to:

- Understand how Hermes Kanban and the dispatcher interact with your LLM gateway.

- Configure sequential or limited parallel execution for heavy tasks.

- Batch work with cron so night jobs do not collide with daytime interactive use.

- Monitor and tune the system so your GPUs stay busy but never overloaded.

How Hermes Kanban and the dispatcher work

At a high level the system has three layers:

- The board — a durable SQLite database storing tasks, columns, relations and history.

- Workers — Hermes profiles that can be started in isolated workspaces to process a task.

- Dispatcher daemon: a long-lived process that watches the board and promotes tasks to runs.

When you create a task using the CLI or dashboard it usually starts in a backlog or ready column.

The dispatcher periodically scans for tasks that meet your filters, atomically claims a card and spawns the assigned profile with the right tools and memory.

Each worker then talks to your LLM gateway or local runtime, for example an OpenAI-compatible proxy fronting Ollama, vLLM or llama.cpp. For deployment choices across these runtimes, see LLM Hosting in 2026: Local, Self-Hosted and Cloud Infrastructure Compared. If you are tuning request fan-out on Ollama itself, this pairs well with How Ollama Handles Parallel Requests.

If you leave dispatch uncapped and add fifty heavy research tasks, the dispatcher may try to start all fifty at once, flooding your gateway with concurrent requests. On a single GPU or a CPU-bound node this produces queueing, thrashing and timeouts instead of throughput.
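A capped dispatcher avoids this by claiming, on each tick, only as many ready cards as there are free slots under the cap. The bash sketch below is hypothetical: `slots_to_claim` is an illustrative name, not part of Hermes, and it shows only the arithmetic of a concurrency cap, not how Hermes actually implements claiming.

```bash
# Hypothetical sketch, not Hermes code: how many ready cards a capped
# dispatcher may claim on one tick.
# slots_to_claim MAX_ACTIVE ACTIVE READY
#   MAX_ACTIVE: configured cap on concurrently active tasks
#   ACTIVE:     tasks currently running
#   READY:      tasks waiting in the ready column
slots_to_claim() {
  local max_active=$1 active=$2 ready=$3
  local free=$(( max_active - active ))
  # Never claim a negative number (e.g. the cap was lowered while tasks ran).
  (( free < 0 )) && free=0
  # Never claim more than is actually waiting.
  (( ready < free )) && free=$ready
  echo "$free"
}
```

For example, with a cap of 3, one task already running and fifty ready, `slots_to_claim 3 1 50` prints 2, so only two new workers start on that tick instead of fifty.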

Strategy 1: Reduce concurrency at the dispatcher

The simplest control is to cap how many workers the dispatcher can run in parallel. In your Hermes configuration you will typically see a section that controls the Kanban dispatcher and worker pool.

In current Hermes releases the dispatcher is embedded in `hermes gateway start` by default, and the same claim-and-spawn kernel enforces the concurrency caps there as well.

However, users do report odd behaviour that can look like limit drift, so validate the runtime conditions before blaming the cap itself.

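A minimal example using a TOML-style config might look like this:

```toml
[kanban.dispatcher]
enabled = true
poll_interval_seconds = 10     # how often the dispatcher scans for ready tasks
max_active_tasks = 3           # cap on cards in the active state across the board

[kanban.workers]
profile = "researcher"
max_parallel_per_profile = 2   # cap on concurrent runs of this profile
```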
If your version uses different key names, keep the same intent when mapping the settings:

- one limit for total active tasks across the board

- one limit for per-profile or per-worker-pool parallelism

- an optional dispatch tick interval

The command names and operator flows are summarized in the Hermes Agent CLI cheat sheet.

Known gotchas from the docs and community threads:

- Do not run both gateway-embedded dispatch and the legacy `hermes kanban daemon --force` on the same `kanban.db`, because that creates claim races.

- If the gateway is down, `ready` tasks do not dispatch at all, and then appear to burst when the gateway returns.

- A long dispatch interval can feel like uneven scheduling, because claims happen in ticks instead of continuously.

- Older Kanban builds had several run-state and reclaim edge cases that were fixed across follow-up patches, so behaviour may differ by version.

Quick verification checklist when behaviour looks wrong:

```bash
# 1) confirm exactly one dispatcher path is active
pgrep -af "hermes gateway start|hermes kanban daemon"

# 2) check the gateway-mode dispatch settings (grep -E is portable; rg works too)
grep -nE "dispatch_in_gateway|dispatch_interval_seconds" ~/.hermes/config.yaml

# 3) inspect queue shape
hermes kanban list --status ready
hermes kanban list --status active
```
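Step 1 is easy to misread by eye, so it can help to turn the `pgrep -af` output into a verdict. This is a hypothetical helper (the name `classify_dispatchers` is illustrative): it counts one matching process per line on stdin and prints `none`, `ok` or `multiple`.

```bash
# Hypothetical helper: classify `pgrep -af` output for the two dispatcher paths.
# Reads one process per line on stdin; prints none, ok or multiple.
classify_dispatchers() {
  local count=0 line
  # The `|| [ -n "$line" ]` also counts a final line without a trailing newline.
  while IFS= read -r line || [ -n "$line" ]; do
    [ -n "$line" ] && count=$(( count + 1 ))
  done
  case $count in
    0) echo "none" ;;
    1) echo "ok" ;;
    *) echo "multiple" ;;
  esac
}

# Example on a real host:
# pgrep -af "hermes gateway start|hermes kanban daemon" | classify_dispatchers
```

A single dispatcher could show up as more than one line if it forks children with the same command line, so treat `multiple` as a prompt to inspect the process list rather than as proof of a claim race.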

Key ideas:

- `max_active_tasks` limits how many Kanban cards can be in the `active` state at once.

- `max_parallel_per_profile` ensures that even if many tasks target the same heavy profile, only a small number of them run together.

- With a small self-hosted cluster you usually want values between 1 and 4 to keep latency predictable.
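If the two caps combine as a minimum (a reasonable reading, but verify it against your version), then work that all targets one profile is limited by the smaller of the two. In the example config above, `researcher` tasks would top out at 2 concurrent runs even though `max_active_tasks = 3`. A hypothetical bash helper makes that arithmetic explicit:

```bash
# Hypothetical helper: effective concurrency when both caps apply.
# ASSUMPTION: the board-wide cap and the per-profile cap combine as a minimum.
# effective_parallelism MAX_ACTIVE_TASKS MAX_PARALLEL_PER_PROFILE NUM_BUSY_PROFILES
effective_parallelism() {
  local max_active=$1 per_profile=$2 profiles=$3
  # Each busy profile contributes at most per_profile concurrent runs.
  local from_profiles=$(( per_profile * profiles ))
  if (( from_profiles < max_active )); then
    echo "$from_profiles"
  else
    echo "$max_active"
  fi
}
```

With one busy profile, `effective_parallelism 3 2 1` prints 2; with four busy profiles the board-wide cap of 3 becomes the binding limit.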

When you first deploy Hermes near your LLM gateway, start conservatively:

- Begin with `max_active_tasks = 1`.

- Observe GPU and CPU utilisation.

- Increase to 2 or 3 only if the hardware is clearly underused.
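Writing the ramp-up rule down helps you apply it consistently. This is a hypothetical sketch: the 60% threshold for "clearly underused" and the ceiling of 3 are assumptions to adjust, and the utilisation figure would come from whatever you already collect, for example `nvidia-smi` on an NVIDIA host.

```bash
# Hypothetical helper: suggest the next max_active_tasks value.
# ASSUMPTIONS: "clearly underused" means average GPU utilisation below 60%,
# and 3 is the ceiling for a small self-hosted node.
# next_cap CURRENT_CAP AVG_GPU_UTIL_PERCENT
next_cap() {
  local current=$1 util=$2
  local threshold=60 ceiling=3
  if (( util < threshold && current < ceiling )); then
    echo $(( current + 1 ))   # hardware is idle enough: allow one more task
  else
    echo "$current"           # busy or already at the ceiling: hold steady
  fi
}

# Example input on an NVIDIA host (first GPU only):
# util=$(nvidia-smi --query-gpu=utilization.gpu --format=csv,noheader,nounits | head -n1)
# next_cap 1 "$util"
```

Raise the cap one step at a time and let the system settle before measuring again; a single low reading during a quiet period is not evidence of spare capacity.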

Strategy 2: Encode dependencies for strictly sequential flows

Some workflows should run strictly one after another — for example:

- multi step data pipelines with shared intermediate artefacts

- migrations or infrastructure changes

- batch jobs that write to the same object store or database

Hermes Kanban supports parent child dependencies between tasks so that a child card becomes dispatchable only when its parent is done.

You can model this with a small helper script around the Hermes CLI:

```bash
#!/usr/bin/env bash
set -euo pipefail

# Create the parent task; assumes `hermes kanban add` prints the new task id on stdout.
parent_id="$(hermes kanban add \
  --title 'Ingest customer logs for April' \
  --profile 'etl-worker' \
  --column backlog)"

# Both children depend on the parent and stay blocked until it is done.
hermes kanban add \
  --title 'Generate April anomaly report' \
  --profile 'analytics-worker' \
  --column backlog \
  --parent "${parent_id}"

hermes kanban add \
  --title 'Publish April summary to dashboard' \
  --profile 'reporting-worker' \
  --column backlog \
  --parent "${parent_id}"
```

With an appropriate board policy and low dispatcher limits, only the parent task runs first.

Once it finishes, both child tasks become ready, and the dispatcher pulls them in as capacity allows without ever exceeding your concurrency caps. With `max_active_tasks = 1` they run strictly one after the other.
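The gating rule itself can be stated precisely: a child is dispatchable only once every one of its parents is done, and a task with no parents is free to run. The bash sketch below is hypothetical; the function name and the status strings (`done`, `active`, `ready`) are illustrative and may not match Hermes's internal values.

```bash
# Hypothetical sketch of the parent/child gate; status names are illustrative.
# is_dispatchable PARENT_STATUS...
# Returns 0 (dispatchable) only if every parent status is "done".
# With no arguments (no parents) it also returns 0.
is_dispatchable() {
  local status
  for status in "$@"; do
    [ "$status" = "done" ] || return 1
  done
  return 0
}
```

For example, `is_dispatchable done` succeeds, while `is_dispatchable done active` fails because one parent is still running.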

Strategy 3: Disable automatic dispatch and use cron

For some environments you may prefer explicit time windows for heavy workloads.

For example you might want research tasks and document ingestion to run after midnight while GPUs are idle.

There are two scheduling variants people call cron in this setup:

- Linux host cron using `crontab`.

- Hermes internal scheduler cron expressions in the Hermes config.

Option A: Linux host cron

In this model you disable automatic Kanban dispatch and let the OS schedule a wrapper script.

An example script:

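```bash
#!/usr/bin/env bash
# kanban-promote.sh: promote at most N ready tasks to active (default 3).
set -euo pipefail

MAX_TO_PROMOTE="${1:-3}"

# Assumes --quiet prints one task id per line.
ready_ids="$(hermes kanban list --column ready --limit "${MAX_TO_PROMOTE}" --quiet)"

# Nothing ready: exit quietly so cron does not log noise.
if [ -z "${ready_ids}" ]; then
  exit 0
fi

# Read ids line by line instead of relying on unquoted word splitting.
while IFS= read -r id; do
  [ -n "${id}" ] || continue
  echo "Promoting task ${id} to active"
  hermes kanban promote --id "${id}" --column active
done <<< "${ready_ids}"
```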

Then add a Linux cron entry on the host where the dispatcher or gateway runs:

```
*/15 * * * * /opt/hermes/scripts/kanban-promote.sh 2 >> /var/log/hermes/kanban-cron.log 2>&1
```

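This guarantees that no more than two new tasks become active every fifteen minutes, regardless of how many cards you drop into ready. Note that it caps promotions, not the total number of active tasks: if earlier tasks are still running, each run still adds up to two more.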
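Because the cap applies per run, you can work out the worst case for a batch window in advance. This hypothetical helper assumes the window starts exactly on a cron tick (for example 00:00 with `*/15`) and ends just before the next boundary, so a run at the window's end time is not counted.

```bash
# Hypothetical helper: upper bound on promotions during a batch window.
# ASSUMPTIONS: the window starts exactly on a cron tick, the end time is
# exclusive, and every run finds enough ready tasks to promote its maximum.
# max_promotions WINDOW_MINUTES INTERVAL_MINUTES MAX_PER_RUN
max_promotions() {
  local window=$1 interval=$2 per_run=$3
  # Ticks at 0, interval, 2*interval, ... strictly before the window end:
  # that is ceil(window / interval) runs.
  local runs=$(( (window + interval - 1) / interval ))
  echo $(( runs * per_run ))
}
```

A six-hour window from 00:00 to 06:00 with the two-per-run crontab entry gives `max_promotions 360 15 2`, which prints 48.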

Option B: Hermes internal cron scheduler

If you prefer to keep scheduling rules inside Hermes itself, define a scheduled job with a cron expression in Hermes config and call the same promote command there.

Example pattern:

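```toml
# Turn off automatic dispatch so the scheduled job is the only promoter.
[kanban.dispatcher]
enabled = false

# Every 15 minutes, move at most 2 tasks from ready to active.
[[scheduler.jobs]]
name = "kanban-batch-promote"
cron = "*/15 * * * *"
command = "hermes kanban promote-batch --from ready --to active --max 2"
timezone = "Australia/Melbourne"
```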

If your Hermes build exposes scheduler management commands, you can register the same job from CLI instead of editing config files manually:

```bash
hermes scheduler jobs add \
  --name "kanban-batch-promote" \
  --cron "*/15 * * * *" \
  --timezone "Australia/Melbourne" \
  --command "hermes kanban promote-batch --from ready --to active --max 2"

hermes scheduler jobs list
```

If your installed version does not have hermes scheduler jobs ..., keep using the config based method above, then reload or restart Hermes so the scheduler picks up the new job.

How to use this safely:

- Keep `kanban.dispatcher.enabled = false` if the scheduler job is your only promoter.

- Start with a low `--max` value, such as 1 or 2.

- Send scheduler logs to your normal Hermes log pipeline so skipped or failed promotions are visible.

This gives you the same batching behaviour as Linux cron, but managed as Hermes config instead of host level crontab.
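With either option, a slow run can still be promoting when the next tick fires, so two promoters briefly run at once. One portable guard is an atomic `mkdir` lock around the promote step. This is a hypothetical wrapper: `run_exclusive` and the lock path in the example are illustrative, and `flock(1)` from util-linux is a common alternative where available.

```bash
# Hypothetical wrapper: run a command only if no other run holds the lock.
# mkdir is atomic, so only one concurrent caller can create the lock directory.
# run_exclusive LOCK_DIR COMMAND [ARGS...]
run_exclusive() {
  local lock_dir=$1
  shift
  if ! mkdir "$lock_dir" 2>/dev/null; then
    echo "skip: another run holds ${lock_dir}" >&2
    return 0        # skipping a tick is not an error for a periodic job
  fi
  local rc=0
  "$@" || rc=$?     # capture the command's exit code without aborting
  rmdir "$lock_dir" # release the lock whether the command succeeded or failed
  return "$rc"
}

# Example:
# run_exclusive /tmp/kanban-promote.lock /opt/hermes/scripts/kanban-promote.sh 2
```

If the process is killed outright (for example with SIGKILL), the lock directory is left behind and every later run skips, so alert on repeated `skip:` lines in the log.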

Strategy 4: Segment boards and profiles for different gateways

If you operate multiple LLM backends — for example:

- a small GPU node with a 7B instruct model

- a CPU only node for lightweight classification

- a separate high end box for heavy reasoning

you can reduce contention by assigning different Hermes profiles and boards to each gateway.

Typical pattern:

- Create profiles like research-gpu, summarise-cpu, ops-gateway.

- Configure each profile with a dedicated base URL and API key for its own gateway.

- Create separate Kanban lanes or even separate boards for each profile.

Combined with dispatcher caps, this keeps each gateway busy with its own set of workers without one noisy workload starving the others.
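Keeping the routing in one explicit lookup makes it easy to audit which profile hits which backend. In this hypothetical sketch the host names, ports and `/v1` suffix are placeholders for your own OpenAI-compatible endpoints; in Hermes itself you would set the base URL in each profile's configuration, as described above.

```bash
# Hypothetical lookup: which gateway each profile should use.
# Host names and ports are placeholders, not defaults of any product.
gateway_for_profile() {
  case "$1" in
    research-gpu)  echo "http://gpu-heavy.internal:8000/v1" ;;  # high-end box, heavy reasoning
    summarise-cpu) echo "http://cpu-node.internal:11434/v1" ;;  # CPU-only node, light classification
    ops-gateway)   echo "http://gpu-small.internal:8000/v1" ;;  # small GPU node, 7B instruct model
    *)             echo "unknown profile: $1" >&2; return 1 ;;
  esac
}
```

Failing loudly on an unknown profile is deliberate: a silent fallback to a shared default gateway would reintroduce exactly the contention this strategy is meant to remove.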

Hermes Kanban Monitoring and Tuning

Whichever strategy you choose, you should monitor:

- LLM gateway metrics — request rate, latency, error rate, token throughput.

- Node health — GPU utilisation, VRAM usage, CPU load and RAM.

- Hermes metrics — how many tasks are in backlog, ready, active and done.

For production metric baselines and dashboards, see Monitor LLM Inference in Production with Prometheus and Grafana and the broader LLM Performance hub.

Start with low concurrency, then gradually raise limits while watching for:

- rising latency at constant throughput

- increasing timeout or rate limit errors

- long tails where some tasks stay active for a very long time

As soon as you see these symptoms, roll back to the previous stable configuration and keep that as your default.
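Making the rollback rule explicit removes the guesswork. This hypothetical sketch compares the current p95 latency and error rate against a baseline captured at the last stable setting; the 1.5x latency and 1% error thresholds are assumptions to tune, and the inputs are integers (milliseconds and basis points) because bash arithmetic is integer-only.

```bash
# Hypothetical rollback check; thresholds are assumptions, not Hermes defaults.
# should_roll_back BASELINE_P95_MS CURRENT_P95_MS ERROR_RATE_BASIS_POINTS
#   Error rate is in basis points (100 = 1%).
should_roll_back() {
  local baseline=$1 current=$2 err_bp=$3
  # Latency regression: current p95 above 1.5x baseline, i.e. 2*current > 3*baseline.
  if (( 2 * current > 3 * baseline )); then
    echo "yes: p95 ${current}ms vs baseline ${baseline}ms"
    return 0
  fi
  # Error regression: more than 1% of requests timing out or rate limited.
  if (( err_bp > 100 )); then
    echo "yes: error rate ${err_bp} bp"
    return 0
  fi
  echo "no"
  return 1
}
```

This check covers the first two symptoms; long tails are better caught by alerting on tasks that stay `active` longer than a fixed budget.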

When Kanban is the right tool

Hermes Kanban shines when you have:

- long lived research or engineering backlogs

- multi agent collaboration with named profiles

- workflows that must survive restarts and host reboots

- humans who want a dashboard to triage work

If you only need a single run to create a few temporary helpers, the built-in delegate task tools are usually simpler.

Once you need history, dashboards and strict control over how your agents hit self-hosted LLMs, the Kanban board plus dispatcher is the right foundation.

With a few configuration tweaks and optional cron-based batching, you can keep Hermes Kanban responsive while protecting your gateway and hardware.